reinforcement learning

-

Robert Grande and Thomas Walsh and Jonathan How

Sample Efficient Reinforcement Learning with Gaussian Processes (pdf)

This paper derives sample complexity results for using Gaussian Processes (GPs) in both model-based and model-free reinforcement learning (RL). We show that GPs are KWIK learnable, proving for the first time that a model-based RL approach using GPs, GP-Rmax, is sample efficient (PAC-MDP). However, we then show that previous approaches to model-free RL using GPs take an exponential number of steps to find an optimal policy, and are therefore not sample efficient. The third and main contribution is the introduction of a model-free RL algorithm using GPs, DGPQ, which is sample efficient and, in contrast to model-based algorithms, capable of acting in real time, as demonstrated on a five-dimensional aircraft simulator.

-

Rich Sutton and Ashique Rupam Mahmood and Doina Precup and Hado van Hasselt

A new Q(lambda) with interim forward view and Monte Carlo equivalence (pdf)

Q-learning, the most popular of reinforcement learning algorithms, has always included an extension to eligibility traces to enable more rapid learning and improved asymptotic performance on non-Markov problems. The lambda parameter smoothly shifts on-policy algorithms such as TD(lambda) and Sarsa(lambda) from a pure bootstrapping form (lambda=0) to a pure Monte Carlo form (lambda=1). In off-policy algorithms, including Q(lambda), GQ(lambda), and off-policy LSTD(lambda), the lambda parameter is intended to play the same role, but does not; on every exploratory action these algorithms bootstrap regardless of the value of lambda, and as a result they fail to approximate Monte Carlo learning when lambda=1. It may seem that this is inevitable for any online off-policy algorithm; if updates are made on each step on which the target policy is followed, then how could just the right updates be `un-made' upon deviation from the target policy? In this paper, we introduce a new version of Q(lambda) that does exactly that, without significantly increased algorithmic complexity. En route to our new Q(lambda), we introduce a new derivation technique based on the forward-view/backward-view analysis familiar from TD(lambda) but extended to apply at every time step rather than only at the end of episodes. We apply this technique to derive first a new off-policy version of TD(lambda), called PTD(lambda), and then our new Q(lambda), called PQ(lambda).

-

Marc Bellemare and Joel Veness and Erik Talvitie

Skip Context Tree Switching (pdf)

Context Tree Weighting (CTW) is a powerful probabilistic sequence prediction technique that efficiently performs Bayesian model averaging over the class of all prediction suffix trees of bounded depth. In this paper we show how to generalize this technique to the class of K-skip prediction suffix trees. Contrary to regular prediction suffix trees, K-skip prediction suffix trees are permitted to ignore up to K contiguous portions of the context. This allows for significant improvements in predictive accuracy when irrelevant variables are present, a case which often occurs within record-aligned data and images. We provide a regret-based analysis of our approach, and empirically evaluate it on the Calgary corpus and a set of Atari 2600 screen prediction tasks.

-

Bruno Da Silva and George Konidaris and Andrew Barto

Active Learning of Parameterized Skills (pdf)

We introduce a method for actively learning parameterized skills. Parameterized skills are flexible behaviors that can solve any task drawn from a distribution of parameterized reinforcement learning problems. Approaches to learning such skills have been proposed, but limited attention has been given to identifying which training tasks allow for rapid skill acquisition. We construct a non-parametric Bayesian model of skill performance and derive analytical expressions for a novel acquisition criterion capable of identifying tasks that maximize expected improvement in skill performance. We also introduce a spatiotemporal kernel tailored for non-stationary skill performance models. The proposed method is agnostic to policy and skill representation and scales independently of task dimensionality. We evaluate it on a non-linear simulated catapult control problem over arbitrarily mountainous terrains.

-

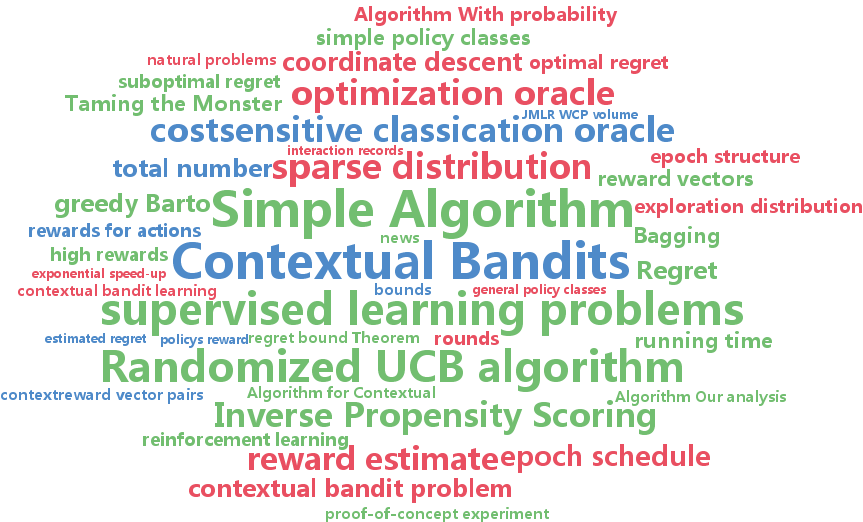

Alekh Agarwal and Daniel Hsu and Satyen Kale and John Langford and Lihong Li and Robert Schapire

Taming the Monster: A Fast and Simple Algorithm for Contextual Bandits (pdf)

We present a new algorithm for the contextual bandit learning problem, where the learner repeatedly takes one of $K$ \emph{actions

-

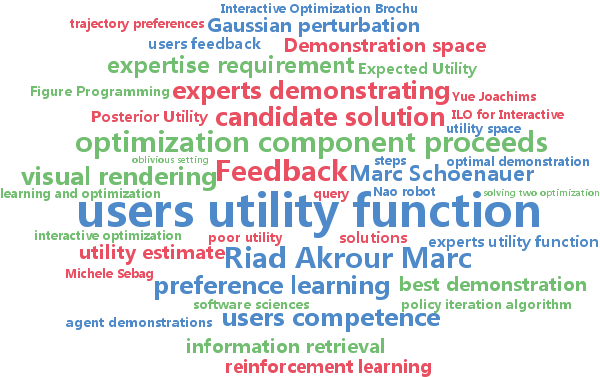

Marc Schoenauer and Riad Akrour and Michele Sebag and Jean-Christophe Souplet

Programming by Feedback (pdf)

This paper advocates a new ML-based programming framework, called Programming by Feedback (PF), which involves a sequence of interactions between the active computer and the user. The latter only provides preference judgments on pairs of solutions supplied by the active computer. The active computer involves two components: the learning component estimates the user's utility function and accounts for the user's (possibly limited) competence; the optimization component explores the search space and returns the most appropriate candidate solution. A proof of principle of the approach is proposed, showing that PF requires a handful of interactions in order to solve some discrete and continuous benchmark problems.

-

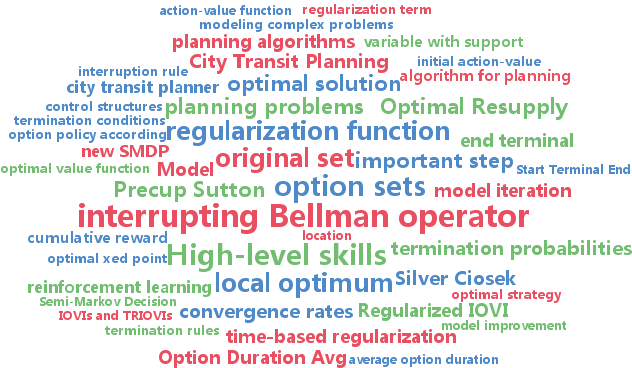

Timothy Mann and Daniel Mankowitz and Shie Mannor

Time-Regularized Interrupting Options (TRIO) (pdf)

High-level skills relieve planning algorithms from low-level details. But when the skills are poorly designed for the domain, the resulting plan may be severely suboptimal. Sutton et al. 1999 made an important step towards resolving this problem by introducing a rule that automatically improves a set of skills called options. This rule terminates an option early whenever switching to another option gives a higher value than continuing with the current option. However, they only analyzed the case where the improvement rule is applied once. We show conditions where this rule converges to the optimal set of options. A new Bellman-like operator that simultaneously improves the set of options is at the core of our analysis. One problem with the update rule is that it tends to favor lower-level skills. Therefore we introduce a regularization term that favors longer duration skills. Experimental results demonstrate that this approach can derive a good set of high-level skills even when the original set of skills cannot solve the problem.

-

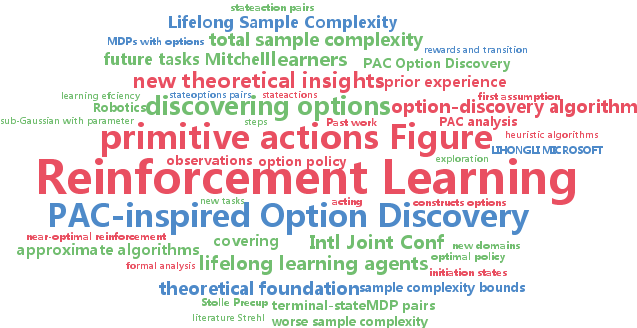

Emma Brunskill and Lihong Li

PAC-inspired Option Discovery in Lifelong Reinforcement Learning (pdf)

A key goal of AI is to create lifelong learning agents that can leverage prior experience to improve performance on later tasks. In reinforcement-learning problems, one way to summarize prior experience for future use is through options, which are temporally extended actions (subpolicies) for how to behave. Options can then be used to potentially accelerate learning in new reinforcement learning tasks. In this work, we provide the first formal analysis of the sample complexity, a measure of learning speed, of reinforcement learning with options. This analysis helps shed light on some interesting prior empirical results on when and how options may accelerate learning. We then quantify the benefit of options in reducing sample complexity of a lifelong learning agent. Finally, the new theoretical insights inspire a novel option-discovery algorithm that aims at minimizing overall sample complexity in lifelong reinforcement learning.