guarantees

-

Yudong Chen and Srinadh Bhojanapalli and Sujay Sanghavi and Rachel Ward

Coherent Matrix Completion (pdf)

Matrix completion concerns the recovery of a low-rank matrix from a subset of its revealed entries, and nuclear norm minimization has emerged as an effective surrogate for this combinatorial problem. Here, we show that nuclear norm minimization can recover an arbitrary $n \times n$ matrix of rank r from O(nr log^2(n)) revealed entries, provided that revealed entries are drawn proportionally to the local row and column coherences (closely related to leverage scores) of the underlying matrix. Our results are order-optimal up to logarithmic factors, and extend existing results for nuclear norm minimization which require strong incoherence conditions on the types of matrices that can be recovered, due to assumed uniformly distributed revealed entries. We further provide extensive numerical evidence that a proposed two-phase sampling algorithm can perform nearly as well as local-coherence sampling and without requiring a priori knowledge of the matrix coherence structure. Finally, we apply our results to quantify how weighted nuclear norm minimization can improve on unweighted minimization given an arbitrary set of sampled entries.

-

Megasthenis Asteris and Dimitris Papailiopoulos and Alexandros Dimakis

Nonnegative Sparse PCA with Provable Guarantees (pdf)

We introduce a novel algorithm to compute nonnegative sparse principal components of positive semidefinite (PSD) matrices. Our algorithm comes with approximation guarantees contingent on the spectral profile of the input matrix A: the sharper the eigenvalue decay, the better the approximation quality. If the eigenvalues decay like any asymptotically vanishing function, we can approximate nonnegative sparse PCA within any accuracy $\epsilon$ in time polynomial in the matrix size $n$ and desired sparsity k, but not in $1/\epsilon$. Further, we obtain a data-dependent bound that is computed by executing an algorithm on a given data set. This bound is significantly tighter than a-priori bounds and can be used to show that for all tested datasets our algorithm is provably within 40%-90% from the unknown optimum. Our algorithm is combinatorial and explores a subspace defined by the leading eigenvectors of A. We test our scheme on several data sets, showing that it matches or outperforms the previous state of the art.

-

Aaditya Ramdas and Javier Peña

Margins, Kernels and Non-linear Smoothed Perceptrons (pdf)

We focus on the problem of finding a non-linear classification function that lies in a Reproducing Kernel Hilbert Space (RKHS) both from the primal point of view (finding a perfect separator when one exists) and the dual point of view (giving a certificate of non-existence), with special focus on generalizations of two classical schemes - the Perceptron (primal) and Von-Neumann (dual) algorithms. We cast our problem as one of maximizing the regularized normalized hard-margin ($\rho$) in an RKHS and %use the Representer Theorem to rephrase it in terms of a Mahalanobis dot-product/semi-norm associated with the kernel's (normalized and signed) Gram matrix. We derive an accelerated smoothed algorithm with a convergence rate of $\tfrac{\sqrt {\log n

-

Philip Bachman and Amir-Massoud Farahmand and Doina Precup

Sample-based approximate regularization (pdf)

We introduce a method for regularizing linearly parameterized functions using general derivative-based penalties, which relies on sampling as well as finite-difference approximations of the relevant derivatives. We call this approach sample-based approximate regularization (SAR). We provide theoretical guarantees on the fidelity of such regularizers, compared to those they approximate, and prove that the approximations converge efficiently. We also examine the empirical performance of SAR on several datasets.

-

Dimitris Papailiopoulos and Ioannis Mitliagkas and Alexandros Dimakis and Constantine Caramanis

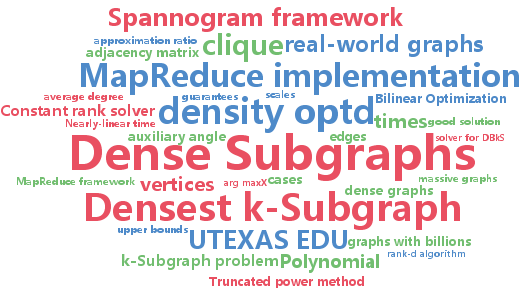

Finding Dense Subgraphs via Low-Rank Bilinear Optimization (pdf)

Given a graph, the Densest $k$-Subgraph (\DkS) problem asks for the subgraph on $k$ vertices that contains the largest number of edges. In this work, we develop a novel algorithm for \DkS{

-

Xiaotong Yuan and Ping Li and Tong Zhang

Gradient Hard Thresholding Pursuit for Sparsity-Constrained Optimization (pdf)

Hard Thresholding Pursuit (HTP) is an iterative greedy selection procedure for finding sparse solutions of underdetermined linear systems. This method has been shown to have strong theoretical guarantees and impressive numerical performance. In this paper, we generalize HTP from compressed sensing to a generic problem setup of sparsity-constrained convex optimization. The proposed algorithm iterates between a standard gradient descent step and a hard truncation step with or without debiasing. We prove that our method enjoys the strong guarantees analogous to HTP in terms of rate of convergence and parameter estimation accuracy. Numerical evidences show that our method is superior to the state-of-the-art greedy selection methods when applied to learning tasks of sparse logistic regression and sparse support vector machines.